YouTube Semantic Search

OpenAI-powered semantic search for any YouTube playlist, featuring the All-In Podcast.

Intro

I love the All-In Podcast. But search and discovery with podcasts can be really challenging.

I built this project to solve this problem… and I also wanted to play around with cool AI stuff.

This project uses the latest models from OpenAI to build a semantic search index across every episode of the Pod. It allows you to find your favorite moments with Google-level accuracy and rewatch the exact clips you’re interested in.

You can use it to power advanced search across any YouTube channel or playlist. The demo uses the All-In Podcast because it’s my favorite, but it’s designed to work with any playlist.

Example Queries

- sweater karen

- best advice for founders

- poker story from last night

- crypto scam ponzi scheme

- luxury sweater chamath

- phil helmuth

- intellectual honesty

- sbf ftx

- science corner

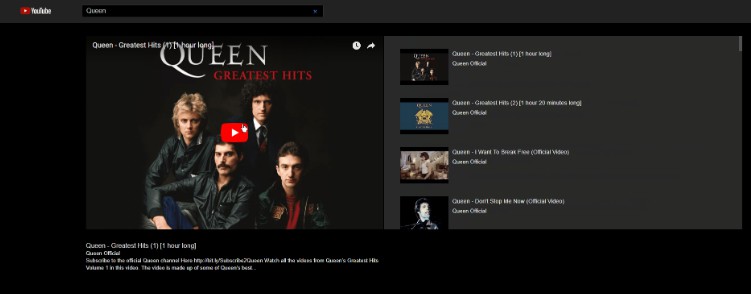

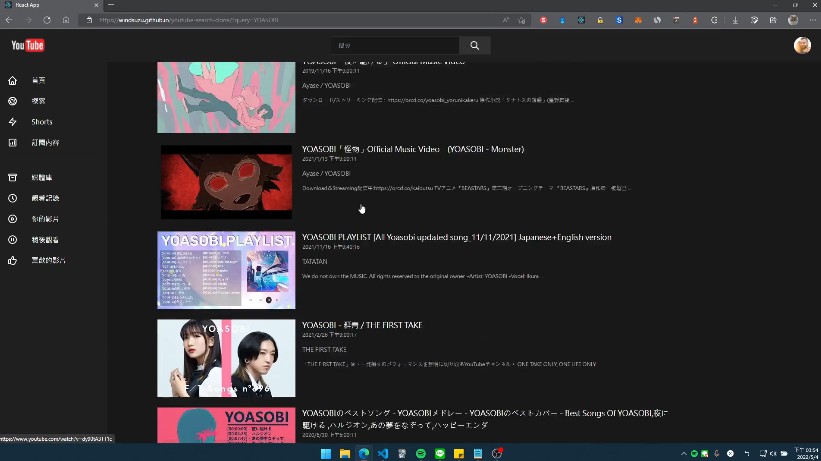

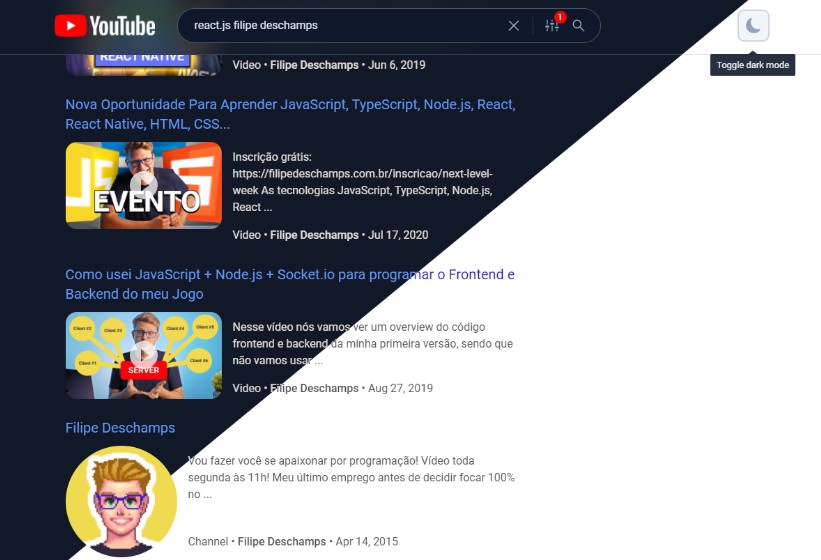

Screenshots

How It Works

Under the hood, it uses:

- OpenAI – We’re using the brand new text-embedding-ada-002 embedding model, which captures deeper information about text in a latent space with 1536 dimensions. This allows us to go beyond keyword search and search by higher-level topics.

- Pinecone – Hosted vector search which enables us to efficiently perform k-NN searches across these embeddings

- Vercel – Hosting and API functions

- Next.js – React web framework
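To make the k-NN idea concrete, here is a minimal brute-force sketch of what a vector search does conceptually. It assumes cosine similarity as the metric (the index metric isn't stated here), and the `EmbeddedChunk` type, the `topK` helper, and the toy 3-dimensional vectors are made up for illustration. A hosted index like Pinecone does this far more efficiently over the real 1536-dimensional embeddings.

```typescript
// Brute-force k-NN over embeddings using cosine similarity.
// Illustrative only: names and data here are hypothetical.

interface EmbeddedChunk {
  id: string
  vector: number[]
}

function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) {
    throw new Error('vectors must have the same dimensionality')
  }
  let dot = 0
  let normA = 0
  let normB = 0
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i]
    normA += a[i] * a[i]
    normB += b[i] * b[i]
  }
  // Treat a zero vector as having no similarity to anything.
  if (normA === 0 || normB === 0) return 0
  return dot / (Math.sqrt(normA) * Math.sqrt(normB))
}

// Score every chunk against the query and keep the k best matches.
function topK(query: number[], chunks: EmbeddedChunk[], k: number) {
  return chunks
    .map((chunk) => ({
      id: chunk.id,
      score: cosineSimilarity(query, chunk.vector)
    }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
}

// Toy 3-dimensional vectors stand in for real 1536-dimensional embeddings.
const toyChunks: EmbeddedChunk[] = [
  { id: 'poker', vector: [1, 0, 0] },
  { id: 'crypto', vector: [0, 1, 0] },
  { id: 'founders', vector: [0.9, 0.1, 0] }
]

const nearest = topK([1, 0, 0], toyChunks, 2)
console.log(nearest.map((m) => m.id)) // → [ 'poker', 'founders' ]
```

Because the score depends on direction rather than shared keywords, two passages about the same topic can match even when they use different words.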

We use Node.js and the YouTube Data API v3 to fetch the videos of our target playlist. In this case, we’re focused on the All-In Podcast Episodes Playlist, which contains 108 videos at the time of writing.

```bash
npx tsx src/bin/resolve-yt-playlist.ts
```
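For a rough idea of what resolving a playlist involves, here is a minimal sketch against the `playlistItems` endpoint of the YouTube Data API v3. It is not the actual script: the function names and the `PlaylistPage` type are hypothetical, and it assumes an API key and a runtime with a global `fetch` (Node.js 18+). The API returns at most 50 items per page, so a playlist of 108 videos needs three requests.

```typescript
const PLAYLIST_ITEMS_ENDPOINT =
  'https://www.googleapis.com/youtube/v3/playlistItems'

// Build the request URL for one page of a playlist's items.
function buildPlaylistItemsUrl(
  playlistId: string,
  apiKey: string,
  pageToken?: string
): string {
  const params = new URLSearchParams({
    part: 'snippet',
    maxResults: '50', // the maximum page size the API allows
    playlistId,
    key: apiKey
  })
  if (pageToken) params.set('pageToken', pageToken)
  return `${PLAYLIST_ITEMS_ENDPOINT}?${params.toString()}`
}

// Only the response fields this sketch reads.
interface PlaylistPage {
  items: { snippet: { title: string; resourceId: { videoId: string } } }[]
  nextPageToken?: string
}

// Follow nextPageToken until every page has been collected.
async function fetchPlaylistVideoIds(
  playlistId: string,
  apiKey: string
): Promise<string[]> {
  const videoIds: string[] = []
  let pageToken: string | undefined
  do {
    const res = await fetch(buildPlaylistItemsUrl(playlistId, apiKey, pageToken))
    if (!res.ok) throw new Error(`YouTube API error: ${res.status}`)
    const page = (await res.json()) as PlaylistPage
    for (const item of page.items) {
      videoIds.push(item.snippet.resourceId.videoId)
    }
    pageToken = page.nextPageToken
  } while (pageToken)
  return videoIds
}
```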

We download the English transcripts for each episode using a hacky HTML scraping solution, since the YouTube API doesn’t allow non-OAuth access to captions. Note that a few episodes don’t have automated English transcriptions available, so we’re just skipping them at the moment. A better solution would be to use Whisper to transcribe each episode’s audio.

Once we have all of the transcripts and metadata downloaded locally, we pre-process each video’s transcript, breaking it up into reasonably sized chunks of ~100 tokens, and fetch each chunk’s text-embedding-ada-002 embedding from OpenAI. This results in ~200 embeddings per episode.
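The chunking step can be sketched roughly as follows. This is an illustration under stated assumptions, not the project's actual pre-processing: the `TranscriptSegment` and `TranscriptChunk` types are hypothetical, and token counts are approximated by counting whitespace-separated words, whereas a real tokenizer would give different numbers.

```typescript
interface TranscriptSegment {
  start: number // seconds from the beginning of the video
  text: string
}

interface TranscriptChunk {
  start: number // start time of the first segment in the chunk
  text: string
  approxTokens: number
}

// Rough stand-in for a tokenizer: counts whitespace-separated words.
function approxTokenCount(text: string): number {
  const trimmed = text.trim()
  return trimmed === '' ? 0 : trimmed.split(/\s+/).length
}

// Greedily pack consecutive segments into chunks of at most maxTokens.
// A single segment larger than maxTokens becomes its own oversized chunk.
function chunkTranscript(
  segments: TranscriptSegment[],
  maxTokens = 100
): TranscriptChunk[] {
  const chunks: TranscriptChunk[] = []
  let current: TranscriptChunk | null = null

  for (const segment of segments) {
    const tokens = approxTokenCount(segment.text)
    if (tokens === 0) continue

    // Close the current chunk when this segment would exceed the budget.
    if (current && current.approxTokens + tokens > maxTokens) {
      chunks.push(current)
      current = null
    }

    if (!current) {
      current = {
        start: segment.start,
        text: segment.text.trim(),
        approxTokens: tokens
      }
    } else {
      current.text += ' ' + segment.text.trim()
      current.approxTokens += tokens
    }
  }

  if (current) chunks.push(current)
  return chunks
}
```

Keeping the start time of the first segment on each chunk is what lets a search hit point back at a specific moment in the video.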

All of these embeddings are then upserted into a Pinecone search index with a dimensionality of 1536. There are ~17,575 embeddings in total across ~108 episodes of the All-In Podcast.
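With this many embeddings, upserts are usually sent in batches rather than one at a time. Here is a hedged sketch of that pattern. The batch size of 100 is an assumption, and `upsertBatch` is a hypothetical callback standing in for whichever vector client call you use, so nothing below is tied to a specific Pinecone client version.

```typescript
// Vector record: an id, the embedding values, and optional metadata.
interface VectorRecord {
  id: string
  values: number[]
  metadata?: Record<string, string | number>
}

const EMBEDDING_DIMENSIONS = 1536 // matches the index dimensionality
const BATCH_SIZE = 100 // assumption: pick a size that suits your client

// Split a list into consecutive batches of at most batchSize items.
function toBatches<T>(items: T[], batchSize: number): T[][] {
  if (batchSize <= 0) throw new Error('batchSize must be positive')
  const batches: T[][] = []
  for (let i = 0; i < items.length; i += batchSize) {
    batches.push(items.slice(i, i + batchSize))
  }
  return batches
}

// Validate dimensionality up front, then upsert batch by batch.
// `upsertBatch` is a hypothetical callback wrapping the real client call.
async function upsertAll(
  records: VectorRecord[],
  upsertBatch: (batch: VectorRecord[]) => Promise<void>
): Promise<number> {
  for (const record of records) {
    if (record.values.length !== EMBEDDING_DIMENSIONS) {
      throw new Error(
        `record ${record.id} has ${record.values.length} dimensions`
      )
    }
  }
  const batches = toBatches(records, BATCH_SIZE)
  for (const batch of batches) {
    await upsertBatch(batch)
  }
  return batches.length // number of upsert calls made
}
```

At a batch size of 100, 17,575 embeddings work out to 176 upsert calls.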

```bash
npx tsx src/bin/process-yt-playlist.ts
```

Once our Pinecone search index is set up, we can start querying it either via the webapp or via the example CLI:

```bash
npx tsx src/bin/query.ts
```
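To rewatch the exact clip behind a search hit, the hit has to be turned into a link that starts playback at the right moment. Here is a small sketch of that step. The `SearchMatch` shape is an assumption about what gets stored as metadata alongside each embedding, not the project's actual schema.

```typescript
// Hypothetical shape of a search hit: which video, and where in it.
interface SearchMatch {
  videoId: string
  start: number // seconds from the beginning of the video
  score: number
  text: string
}

// Build a YouTube watch URL that starts playback at the match's timestamp.
function toClipUrl(match: Pick<SearchMatch, 'videoId' | 'start'>): string {
  const seconds = Math.max(0, Math.floor(match.start))
  const id = encodeURIComponent(match.videoId)
  return `https://www.youtube.com/watch?v=${id}&t=${seconds}s`
}

console.log(toClipUrl({ videoId: 'abc123', start: 754.8 }))
// → https://www.youtube.com/watch?v=abc123&t=754s
```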

We also support generating timestamp-based thumbnails of every YouTube video in the playlist. Thumbnails are generated using headless Puppeteer and are uploaded to Google Cloud Storage. We also post-process each thumbnail with lqip-modern to generate nice preview placeholder images.

If you want to generate thumbnails (optional), run:

```bash
npx tsx src/bin/generate-thumbnails.ts
```

Note that thumbnail generation takes ~2 hours and requires a pretty stable internet connection.

The frontend is a Next.js webapp deployed to Vercel that uses our Pinecone index as a primary data store.

TODO

- Use Whisper for better transcriptions

- Support sorting by recency vs relevancy

Feedback

Have an idea on how this webapp could be improved? Find a particularly fun search query?

Feel free to send me feedback, either on GitHub or Twitter.

Credit

- Inspired by Riley Tomasek’s project for searching the Huberman YouTube Channel

- Note that this project is not affiliated with the All-In Podcast. It just pulls data from their YouTube channel and processes it using AI.

License

MIT © Travis Fischer

If you found this project interesting, please consider sponsoring me or following me on Twitter.

The API and server costs add up over time, so if you can spare it, sponsoring on GitHub is greatly appreciated.